Disaster Resilience in New Zealand: What we can learn from Australia

As climate risks and disasters intensify and our infrastructure ages, ensuring the disaster resilience of critical infrastructure comes at a cost, but who should bear it?

At Infrastructure New Zealand’s Infrastructure Resilience Conference, James Russell, Sector Director – Finance and Insurance, spoke alongside colleagues Chris Perks, Sector Director – Transport and Delivery Partners, and Sean O’Meara (BDO). The panel discussed how Australia has approached the funding, financing, and governance of infrastructure resilience, drawing lessons for New Zealand.

Continuity planning empowers businesses to adapt, recover, and thrive

Businesses often struggle to recover from extreme weather events and natural hazards because they are not ready.

It has been estimated that 40% of small and medium-sized enterprises (SMEs) do not reopen after a disaster, and many of those that do reopen fail within a year. Businesses need to rethink their operating models before disruptions happen. Yet building disaster resilience does not always require a resource-intensive process or something entirely new. It means changing not what a business does, but how it does it. This is where business continuity planning comes in.

New study shows rapid cloud loss contributing to record-breaking temperatures

Earth’s cloud cover is rapidly shrinking and contributing to record-breaking temperatures, according to new research involving the Monash-led Australian Research Council Centre of Excellence for 21st Century Weather.

The research, led by the United States’ National Aeronautics and Space Administration (NASA) and published in Geophysical Research Letters, analysed satellite observations and found that the world’s storm cloud zones have been contracting by between 1.5 and 3 per cent each decade over the past 24 years.

Most finance ministries are concerned about the physical impacts of climate change, and the implications of the transition away from fossil fuels, according to the results of a major survey published today (9 June 2025) by the Coalition of Finance Ministers for Climate Action.

However, finance ministries are finding it difficult to incorporate climate change into their economic analyses and face many challenges in taking it into account in their decision-making.

The objective of this brief is to provide an analytical overview of the current and projected drought conditions across central and northern Europe, northern Africa, the eastern Mediterranean, and the Middle East.

Some areas have been experiencing more severe, alert-level drought conditions, particularly in the Mediterranean region, including south-eastern Spain, Cyprus, and most of North Africa, as well as central and south-eastern Türkiye and the Middle East. Alert conditions are rapidly intensifying in large areas of Ukraine and in neighbouring countries, affecting crops and vegetation. Similar conditions are emerging in some areas of central Europe, the Baltic, and the UK.

Artificial Intelligence approaches for disaster risk management

This brief explores AI capabilities to support the EU’s prevention, preparedness, and resilience-building strategies, including the Preparedness Union Strategy. Efforts focus on enhancing information and image processing, advancing AI-driven risk assessment, and strengthening early warning systems.

The goal of the report is to show how understanding social vulnerability can help policymakers to prioritize climate investments, design projects and programs to be more inclusive, and create tailored initiatives that make households and communities stronger and more resilient overall. It highlights how social vulnerability puts some people in harm’s way or prevents them from finding safety; limits their access to resources for adaptation; and constrains their agency and their voice. Poverty is a key factor, but so is social exclusion.

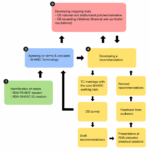

Multi-hazard early warning system – Global Disaster Alert and Coordination System

This report presents the updates and describes the Multi-Hazard and Early Warning System component (MHEWS) of the Global Disaster Alert and Coordination System (GDACS). This report focuses on the methodology underpinning the GDACS score employed across the seven hazards covered by the MHEWS.

GDACS events are produced automatically or semi-automatically for each hazard independently, using dedicated algorithms and the available data, with expert supervision. Every event on GDACS features a score and colour, based on the estimated risk that the event poses to the exposed population and affected area.

The workshop will introduce concepts and tools to help ensure effective governance, disaster-related data management, planning and finance mobilization for local-level disaster risk reduction (DRR), resilience and climate action. It will provide a comprehensive understanding of concepts, tools and approaches for risk understanding and loss and damage assessment, integrated planning, and institutional strengthening across different levels of governance, as well as finance mechanisms to support disaster risk reduction and climate action, with a particular focus on responding to loss and damage.

[MCR2030 Webinar] Using MCR2030 Dashboard to Strengthen Engagement with Cities

The Making Cities Resilient 2030 (MCR2030) initiative is a global partnership that supports cities in strengthening disaster and climate resilience. A key tool available to its cities and partners is the MCR2030 dashboard, an online platform designed to help cities assess their resilience, share insights, and monitor progress along the resilience roadmap. The dashboard also facilitates cities’ access to useful tools and resources provided by MCR2030 service providers, which further support cities in achieving their resilience goals in line with broader global frameworks such as the Sendai Framework, the Paris Agreement, and the Sustainable Development Goals.

4th International Conference on Financing for Development

The Fourth International Conference on Financing for Development (FFD4) provides a unique opportunity to reform financing at all levels, including by supporting reform of the international financial architecture and addressing the financing challenges that are preventing the urgently needed investment push for the SDGs. The FFD4 conference will be held at the FIBES Sevilla Exhibition and Conference Centre.